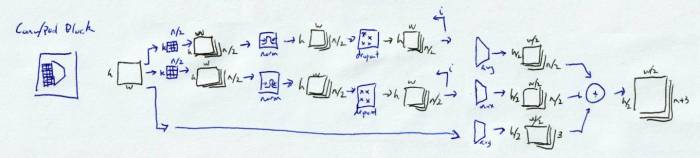

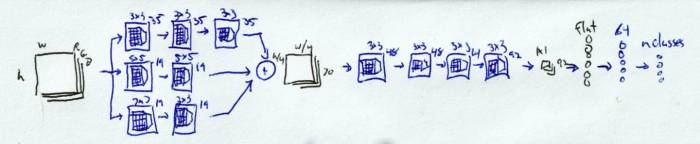

After some interesting reads, I implemented a

convolution+pooling block inspired by ResNet. It looks like this:

An w-by-h image is convolved (with normalization and droput) i-times, then the maxpool and average pool are concatenated with an average pool of the input to produce kernel_count + 3 output

feature maps of size w/2-by-h/2.

Historically I've thrown together models on the fly (like a lot of example code). Having (somewhat erroneously, read on) decided that batch normalization and dropout are good to sprinkle in everywhere, I

combined them all into a single subroutine that can be called from main(). It also forced me to name my layers.

__________________________________________________________________________

Layer (type) Output Shape Param # Connected to

==========================================================================

__________________________________________________________________________

conv3_0_max_0_conv (Conv2D) (None, 256, 256, 16) 448 input[0][0]

__________________________________________________________________________

conv3_0_avg_0_conv (Conv2D) (None, 256, 256, 16) 448 input[0][0]

__________________________________________________________________________

conv3_0_max_0_norm (BatchNorm (None, 256, 256, 16) 64

conv3_0_max_0_conv[0][0]

__________________________________________________________________________

conv3_0_avg_0_norm (BatchNorm (None, 256, 256, 16) 64

conv3_0_avg_0_conv[0][0]

__________________________________________________________________________

conv3_0_max_0_drop (Dropout) (None, 256, 256, 16) 0

conv3_0_max_0_norm[0][0]

__________________________________________________________________________

conv3_0_avg_0_drop (Dropout) (None, 256, 256, 16) 0

conv3_0_avg_0_norm[0][0]

__________________________________________________________________________

conv3_0_max_1_conv (Conv2D) (None, 256, 256, 16) 2320

conv3_0_max_0_dropout[0][0]

__________________________________________________________________________

conv3_0_avg_1_conv (Conv2D) (None, 256, 256, 16) 2320

conv3_0_avg_0_dropout[0][0]

__________________________________________________________________________

conv3_0_max_1_norm (BatchNorm (None, 256, 256, 16) 64

conv3_0_max_1_conv[0][0]

__________________________________________________________________________

conv3_0_avg_1_norm (BatchNorm (None, 256, 256, 16) 64

conv3_0_avg_1_conv[0][0]

__________________________________________________________________________

conv3_0_max_1_drop (Dropout) (None, 256, 256, 16) 0

conv3_0_max_1_norm[0][0]

__________________________________________________________________________

conv3_0_avg_1_drop (Dropout) (None, 256, 256, 16) 0

conv3_0_avg_1_norm[0][0]

__________________________________________________________________________

conv3_0_dense (Dense) (None, 256, 256, 3) 12 input[0][0]

__________________________________________________________________________

conv3_0_maxpool (MaxPool2D) (None, 128, 128, 16) 0

conv3_0_max_1_dropout[0][0]

__________________________________________________________________________

conv3_0_avgpool (AvgPooling (None, 128, 128, 16) 0

conv3_0_avg_1_dropout[0][0]

__________________________________________________________________________

conv3_0_densepool (AvgPool (None, 128, 128, 3) 0

conv3_0_dense[0][0]

__________________________________________________________________________

conv3_0_concatenate (Concat (None, 128, 128, 35) 0

conv3_0_maxpool[0][0]

conv3_0_avgpool

[0][0]

conv3_0_densepo

ol[0][0]

__________________________________________________________________________

Due diligence

Last month I

mentioned concatenate layers and an article about better loss metrics for super-resolution and autoencoders. Earlier this month I

posted some samples of merging layers. So I decided to put those to use.

The author of the loss article (Christopher Thomas BSc Hons. MIAP, whom I will refer to as 'cthomas') published a few more discussions of upscaling and inpainting. His results (example above) look incredible. While an amateur ML enjoyer such as myself doesn't have the brains, datasets, or hardware to compete with the pros, I'll settle for middling results and a little bit of fun.

|

This is part of a series of articles I am writing as part of my ongoing learning and research in Artificial Intelligence and Machine Learning. I'm a software engineer and analyst for my day job aspiring to be an AI researcher and Data Scientist.

I've written this in part to reinforce my own knowledge and understanding, hopefully this will also be of help and interest to others. I've tried to keep the majority of this in as much plain English as possible so that hopefully it will make sense to anyone with a familiarity in machine learning with a some more in depth technical details and links to associates research.

|

The only thing that makes cthomas's articles less approachable is that he uses

Fast AI, a tech stack I'm not familiar with. But

the concepts map easily to Keras/TF, including this great explanation of upscaling/inpainting:

|

To accomplish this a mathematical function takes the low resolution image that lacks details and hallucinates the details and features onto it. In doing so the function finds detail potentially never recorded by the original camera.

|

One model to rule them all

Between at-home coding and coursework, I've bounced around between style transfer, classficiation, autoencoders, in-painters, and super-resolution. I still haven't gotten to GANs, so it was encouraging to read this:

|

Super resolution and inpainting seem to be often regarded as separate and different tasks. However if a mathematical function can be trained to create additional detail that's not in an image, then it should be capable of repairing defects and gaps in the the image as well. This assumes those defects and gaps exist in the training data for their restoration to be learnt by the model.

...

One of the limitations of GANs is that they are effectively a lazy approach as their loss function, the critic, is trained as part of the process and not specifically engineered for this purpose. This could be one of the reasons many models are only good at super resolution and not image repair.

|

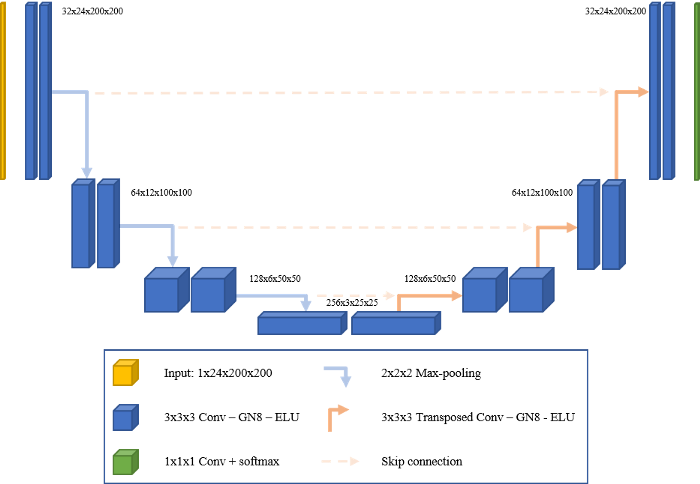

Cthomas's model of choice is U-net:

Interestingly, this model doesn't have the flattened (latent) layer that canonical autoencoders use. I went the same direction in early experiments with much simpler models:

Me Me |

I wasn't sure about the latent layer so I removed that, having seen a number of examples that simply went from convolution to transpose convolution.

|

Next level losses

|

A loss function based on activations from a VGG-16 model, pixel loss and gram matrix loss

|

Instead of a more popular GAN discriminator, cthomas uses

a composite loss calculation that includes activations of specific layers in VGG-16. That's pretty impressive.

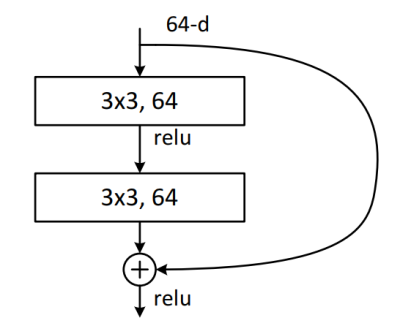

Residual

|

|

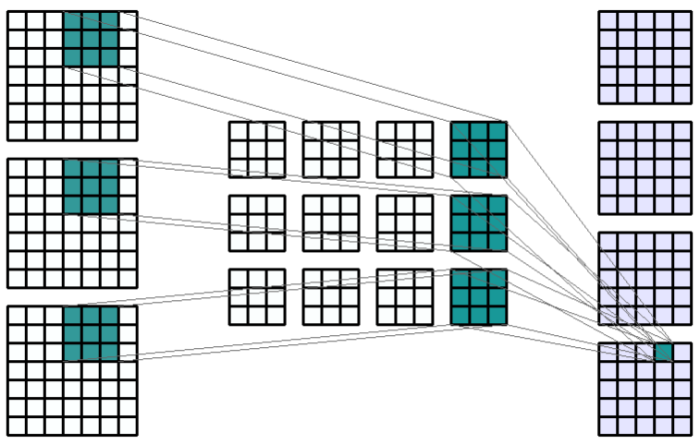

Source. A ResNet convolution block. |

U-net and the once-revolutionary ResNet architectures use

concatenation to propagate feature maps beyond convolutional blocks. Intuitively, this lets each subsequent layer see a less-processed representation of the input data. This could allow deeper kernels to see features that would otherwise have been convolved/maxpooled away, but it simultaneously could mean there are fewer kernels to interpret the structures created by the interstitial layers.

|

|

Source. ResNet-34 and its non-residual equivalent. |

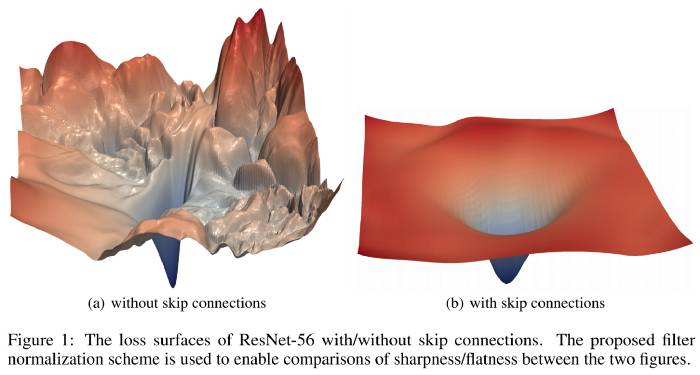

I think there was mention of error gradients getting super-unuseful as networks get deeper and deeper, but that skip connections are a partial remedy.

|

|

Source. An example loss surface with/without skip-connections. The 'learning' part of machine learning amounts to walking around that surface with your eyes closed (but a perfect altimeter and memory), trying to find the lowest point. |

Channels and convolution

|

|

Source. Click the source link for the animated version. |

Something I hadn't ever visualized:

- Convolutional layers consist of kernels, each of which is a customized (trained) template for 'tracing' the input into an output image (feature map, see the gif linked in the image caption at the top).

- The output of a convolutional layer is n-images, where n is the number of kernels.

So the outermost convolutional layer sees one monochrome image per color channel and spits out a monochrome image per kernel. So if I understand it correctly, the

RGB (or CMYK or YCbCr) data doesn't structurally survive past the first convolution. What's more, based on the above image, the output feature maps are created by summing the RGB output.

It seems like there would be

value in retaining structurally-separated channel data, particularly for color spaces like HSV and YCbCr where the brightness is its own channel.

Training should ultimately determine the relevant breakdown of input data, but for some applications the model might be hindered by its input channels getting immediately tossed into the witch's cauldron.

Trying it out

My conv-pool primitive mentioned at the top of the post looks like this:

def conv_pool(input_layer,

prefix,

kernels,

convolutions=2,

dimensions=(3,3),

activation_max='relu',

activation_avg='relu'):

'''

Creates a block of convolution and pooling with max pool branch, avg

pool branch, and a pass through.

-> [Conv2D -> BatchNormalization -> Dropout] * i -> MaxPool ->

-> [Conv2D -> BatchNormalization -> Dropout] * i -> AvgPool ->

Concatenate ->

-> Dense -> .................................... -> AvgPool ->

'''

max_conv = input_layer

avg_conv = input_layer

for i in range(convolutions):

max_conv = conv_norm_dropout(max_conv,

prefix + '_max_' + str(i),

int(kernels / 2),

dimensions,

activation=activation_max,

padding='same')

avg_conv = conv_norm_dropout(avg_conv,

prefix + '_avg_' + str(i),

int(kernels / 2),

dimensions,

activation=activation_avg,

padding='same')

max_pool = layers.MaxPooling2D((2, 2), name=prefix + '_maxpool')

(max_conv)

avg_pool = layers.AveragePooling2D((2, 2), name=prefix + '_avgpool')

(avg_conv)

dense = layers.Dense(3, name=prefix + '_dense')(input_layer)

input_pool = layers.AveragePooling2D((2, 2), name=prefix +

'_densepool')(dense)

concatenate = layers.Concatenate(name=prefix + '_concatenate')

([max_pool, avg_pool, input_pool])

return concatenate

The input is passes through three branches:

- One or more convolutions with a max pool

- One or more convolutions with an average pool

- Skipping straight to an average pool

These are concatenated to produce the output. Having seen feature maps run off the edge of representable values, I used BatchNorm everywhere. I also used a lot of dropout because overfitting.

Why did I use both max pool and average pool? I get the impression that a lot of machine learning examples focus on textbook object recognition problems and are biased toward edge detection (that may be favored by both relu and max pooling).

|

|

|

My updated classifier, each of those sideways-house-things is the 17-layer conv-pool block described above. |

Autoencoder/stylizer

I then used the conv-pool block for

an autoencoder-ish model meant to learn image stylization that I first attempted

here.

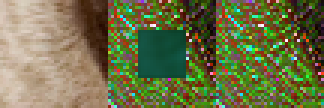

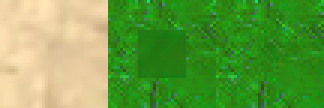

Cropping and colorize

|

|

|

The 36-pixel -> 16-pixel model described above applied to an entire image. |

When you're doing convolution,

the edges of an image provide less information than everywhere else. So while it makes the bookkeeping a little harder, outputting a smaller image means higher confidence in the generated data.

In previous experiments I used downscaling to go from larger to smaller. I hypothesized that deeper layers would learn to ignore the original boundaries but still use that information in constructing its output. While that doesn't make a ton of sense when you think about a convolutional kernel sliding across a feature map, I tossed in some dense layers thinking they'd have positional awareness.

Anyway, the right answer is to let your convolutional kernels see the whole input image (and thereby be able to reconstruct features that would otherwise be cropped) and

then use a Cropping2D layer to ensure you have a fully-informed output.

The format throughout:

- Left: input (unstylized).

- Middle: the model's guess (center box) pasted over the answer key.

- Right: the stylization answer key.

And shown below are sample tiles from four training epochs, with a 36-pixel tile being used to generate a 16-pixel prediction:

The model more-or-less learned the hue/saturation shift with a slight bias towards green. There is some loss of clarity that could be remedied by mapping the output color information to the input brightness. The cropping trick allowed me to more easily tile the operations and thereby use a 36-pixel model on a 1000-pixel image.

For comparison.

|

|

|

Click through for all three frames. |

Other effects: posterize (HSV with mean squared error)

Posterize

makes an image look like a comic or Che Guevara poster.

Posterize HSV with mean absolute error

Switching out MSE for MAE.

Posterize (HSV with mean absolute error)

Annnd huber (loss calcuation).

Other effects: edges

Can the network learn to find edges? The Adobe edge filter is mathematical and kind of noisy, so

it'd be neat if machine learning could learn better edges from imperfect input.

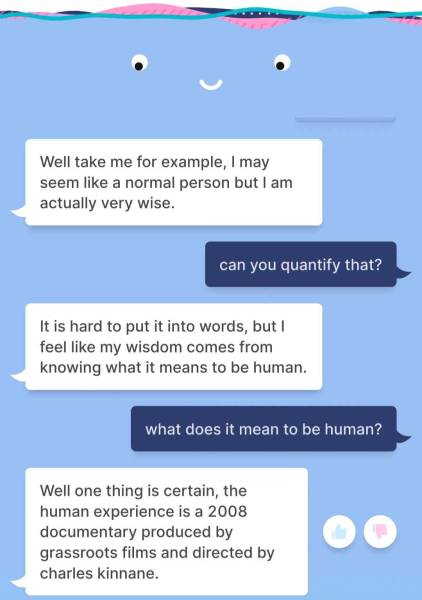

Blenderbot

Zuckercorp came out with a chatbot.

| | | | |

|

|

...

|

Narrator

|

|

|

Well take me for example, I may seem like a normal person but I am actually very wise.

|

BlenderBot

|

Me

|

Can you quantify that?

|

|

|

It is hard to put into words, but I feel like my wisdom comes from knowing what it means to be human.

|

|

What does it mean to be human?

|

|

|

Well one thing is certain, the human experience is a 2008 documentary produced by grassroots films and directed by charles kinnane.

|

|

That was an abrupt plug. Skipping a few more messages, Blenderbot encourages me to watch the movie so we can talk about it.

|

Narrator

|

|

Yes we could discuss the human experience and how it relates to our lives. You should really watch it with an open mind!

|

BlenderBot

|

|

Are you just a viral advertisement for movies?

|

|

|

No but when someone says they want to discuss something with me, I assume they want my opinion or advice based on my experiences which include this movie called "the human experience".

|

|

We chat a bit about how a chatbot watches a movie. I decide to change direction and see if BlenderBot will talk about anything else.

|

Narrator

|

Me

|

Fuck, marry, kill: Dall-e, Taytweets, HAL9000.

|

|

|

Sorry, I don't know. Do you have any fun travel planned?

|

BlenderBot

|

|

Success-ish!

|

Narrator

|

|

Yes, a week in Mogadishu in November.

|

|

|

Awesome! Have you seen the documentary "the human experience"? It is about that place.

|

BlenderBot

|

|

I just looked it up, they don't go to Somalia.

|

|

|

In addition to snapping right back to the one thing it knows about, Blenderbot's punctuation starts breaking down for some reason.

|

Narrator

|

|

Thats true, its about people who live there. Its a great documentary. If you like it I can suggest more

|

BlenderBot

|

Me

|

Do you have any wisdom to share from the film Idiocracy?

|

|

|

The decline of america is real and happening every day but we can turn it around with better choices

|

|

That was pretty good.

|

|

|

There's a new netflix original called "the human experience" which is similar to idiocracy if you're interested

|

|

Wikipedia says that film is from 2008.

|

|

|

You're right, the one on netflix is produced by grassroots films and directed by charles kinnane

|

|

How is it a Netflix original then?

|

|

|

Netflix distributes original streaming television shows including documentaries and films so this falls under that category even though it was made before they started producing their own content

|

BlenderBot

|

|

...

|

Narrator

|

|

Exactly, otherwise shows like house of cards or stranger things wouldn't be considered originals either since they were originally released as tv shows

|

BlenderBot

|

Me

|

Huh, I thought those two shows were in fact Netflix original content. Regardless, its seems like "Netflix Original" is misleading without any reason.

|

|

|

Fact check: Both started on Netflix.

|

Narrator

|

|

Some people think that way but technically they are distributed by them but weren't produced by them like the docu-series stranger things for example

|

BlenderBot

|

|

Stranger Things is a docu-series?

|

|

|

It isn't.

|

Narrator

|

| | | | |

From a Turing Test perspective, Blenderbot fails in its

Marco Rubio-like looping and its inability to remember previous comments. The film recommendation thing seems weird but is probably easily explained - Facebook wants to seed its chatbot with a targeted ad 'organically' placed in a conversation. My experience was pretty inorganic, to the point of the bot essentially saying, "go watch [film] and come talk to me about it".

What struck me most of all was that the bot:

- It seemed to have an agenda (getting me to watch a movie).

- It made a lot of almost-correct but inaccurate statements, e.g. Stranger Things is a documentary, the recommended film takes place in Somalia.

- It redirected the conversation away from the inaccurate statement in a manner that resembles some online discussion.

Dall-e 2

Since

Dall-e mega was too much for my graphics card, I grabbed Dall-e 2. It failed in the usual ambiguous fashion, but since the pretrained files were 7gb on disk, I imagine this one will also be waiting on my 4080.

|

|

|

Dr. Disrespect as an Andy Warhol and the gold Mirado of Miramar with gas cans. |

Failures

Bugs are inevitable, bugs suck. But

in machine learning code that's kind of shoestring and pretty undocumented, bugs are the absolute worst. Small issues with, like, Numpy array syntax can catastrophically impact the success of a model while you're trying to tweak hyperparmaeters. I've thrown in the towel on more than a few experiments only to later find my output array wasn't getting converted to the right color space.

Normalization

Early on, my readings indicated there were two schools of graphics preprocessing:

either normalize to a [0.0:1.0] range or a [-1.0:1.0] range. I went with the former based on the easiest-to-copy-and-paste examples. But looking around Keras, I noticed that other choices depend on this. Namely:

- All activations handle negative inputs, but only some have negative outputs.

- BatchNorm moves values to be zero-centered (unless you provide a coefficient).

- The computation of combination layers (add, sub, multiply) are affected by having negative values.

ML lib v2

So I've undertaken

my first ML library renovation effort:

- Support [0.0:1.0] and [-1.0:1.0] preprocessing, with partitioned relu/tanh/sigmoid activations based on whether negatives are the norm.

- Cut out the dead code (early experiments, early utilities).

- Maximize reuse, e.g. the similarities between an autoencoder and a classifier.

- Accommodate variable inputs/outputs - image(s), color space(s), classifications, etc.

- Object-oriented where MyModel is a parent of MyClassifier, MyAutoencoder, MyGAN. That's, of course, not MyNamingScheme.

- Use Python more better.

Some posts from this site with similar content.

(and some select mainstream web). I haven't personally looked at them or checked them for quality, decency, or sanity. None of these links are promoted, sponsored, or affiliated with this site. For more information, see

.