Covid update

What does a responsible reopening of society look like? I'm not sure anyone knows. Other than a few trips to work, I've gently transitioned to the front porch and then a ride out to get a milkshake and check out the gross red tide water and Windansea.

For Jon's postponed bachelor party, we gathered around a virtual poker table from something like 9pm to 2am.

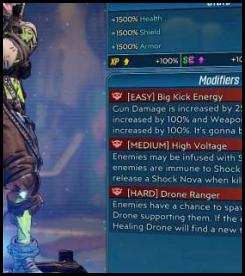

BL3: Revenge of the Cartels

Gearbox released another (free?) mini-DLC that is pretty Scarface-y. The downside was that on release the boss was bugged to be invincible.

Divinity

As mentioned in a previous installment, J and I left the starter island and have been adventuring on the Reaper's Coast. Having run away from a bunch of higher level enemies, I put some thought into why I think the Divinity formula works.

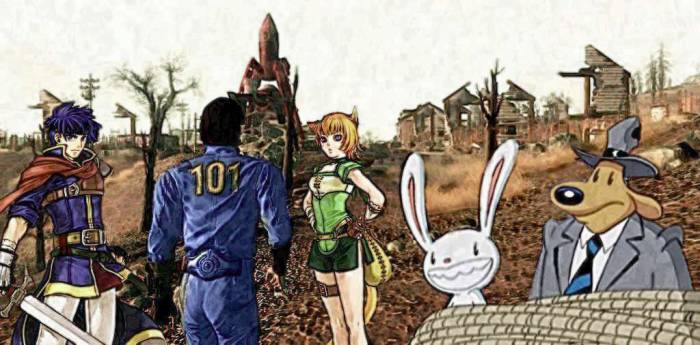

Divinity = Fallout + Fire Emblem + Sam and Max

Fallout - Divinity largely duplicates the RPG elements that, obviously, aren't exclusive to the Bethesda series. A roleplaying system would feel very different without skills and perks, and Divinity brings both, with a perk system that is even more gamechanging. More specific to Fallout, D:OS captures the unforgiving element that lets you wander into battles totally underleveled. It also has a similar overworld/cave system where each area is carefully planned and brimming with puzzles and lore.

Fire Emblem - the combat is tactical in both positioning and actions. A doorway can be the difference between a won battle and total disaster.

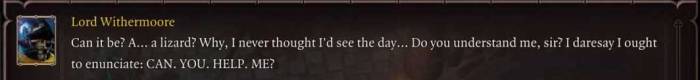

Sam and Max - D:OS has more than a little intelligent and often zany dialogue. The writing is largely original but features callouts to literature and pop culture that are a bit more subtle than, say, Borderlands. And though it's not the most enjoyable experience, Divinity rewards you for scanning everything, sort of like clicking on everything on each screen of Day of the Tentacle.

Of course, it's give and take. Divinity doesn't carry the twitch skill required for non-VATS Fallout combat. Its tactics system (particularly once blinks are unlocked) is only mildly positional. And it's not quite the guided, on-rails experience of an early LucasArts toon game.

It shouldn't go without mentioning that cheesing battles is both fun and shameful. The AI is subtle enough that it's hard to know what's going to happen from moment to moment, but with a few attempts at a harsh encounter you can typically find ways to abuse the scenario. These often involve abusing the simultaneous states of being in battle and out of battle/conversation, depending on the character.

High points

While cheesing battles is a guilty pleasure, sometimes a good plan comes together. In the graveyard there's a sorcerer dog (right?) that summons an undead hulk that can oneshot anyone who isn't a tank. The dog himself is pretty strong, but we whittled his physical armor down, and at that point I cast shackles of pain.

Maybe good for some damage, sometimes you just cast it because you have an action point left. The enemies did their actions as we mildly watched while thinking about our next move. Then the XP award popped up and we exited battle. Mental rewind, what??? I burst out laughing. The undead hulk oneshot my character, doing so much damage that it also killed the shackled summoner. Battle over by pure accident.

There's a particularly challenging battle in the Blackpits where you have both inextinguishable fire that heals enemies and a very suicidal NPC to protect. Looking online for tips, J found that people were calling the battle impossible and broken. Using the borrowed tactic of teleporting the NPC into a tent and placing a crate to block the door, we managed to succeed in our first attempt.

GBES

GBES rode again with a backyard exploration that featured Rouleur to-go crowlers... and three adorable growlers.

Coding: site meta

Kilroy had a little technical debt built up. This, combined with coffee and covid, resulted in a Saturday that was something of a blur but ended with solved problems and new problems. First...

|

Issue

|

Fix

|

|

Tag pages are supposed to display an image and sometimes a text snippet from each associated post. Somewhere along the way, the tag pages and actual posts stopped agreeing on the preview image and there were broken links.

|

Generating everything anew helped with this. I also changed out the treatment of external content (linked images). From the perl markup days I had been truncating the often-lengthy source filenames. Now they're hashed. On the downside, I didn't propagate web traffic information to the new naming scheme.

|

|

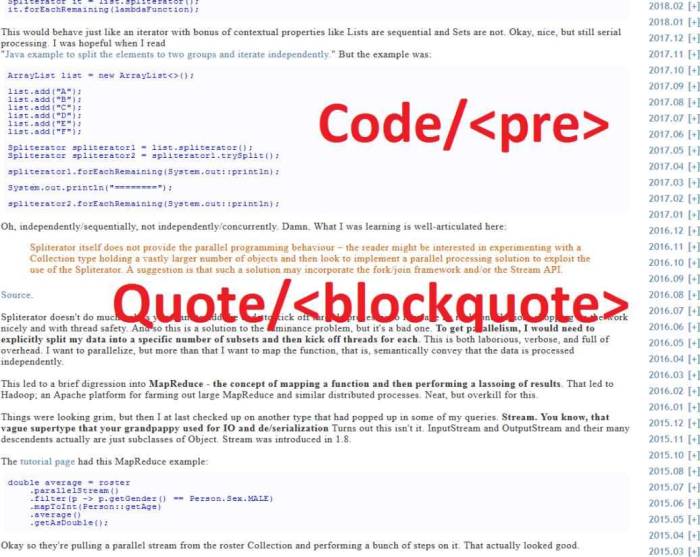

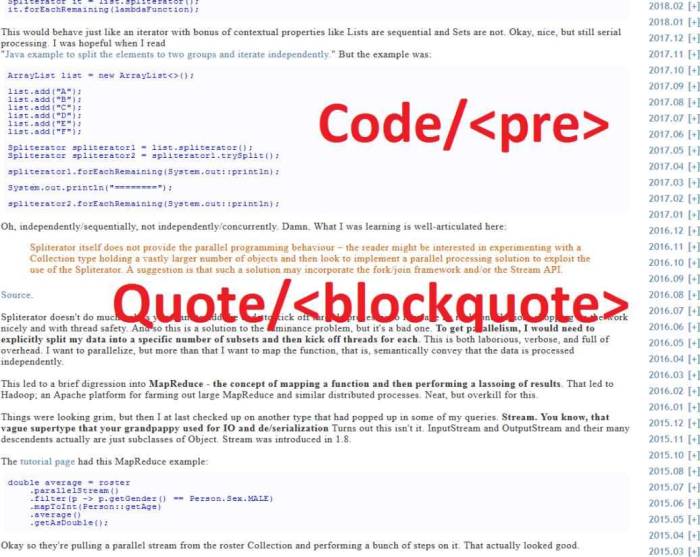

In spite of having a markup language that ties in to html generation, site infrastructure, and image processing, I was using 'blockquote' tags for quotes and 'pre' for code. This isn't terrible, but my markup processing was adding unnecessary linebreaks in them. For the sake of consistent parsing and future formatting, it makes sense to go full custom markup.

|

This was really just a matter of adding markup tags and changing existing stuff. I got a bonus trip down memory lane where I found what was left of the postprocessed Blogger scrape.

|

|

Spacing between elements was a bit haphazard. For example, gallery markup elements (a bunch of thumbnails) had a large vertical margin whereas images would not. I ended up inconsistently trying to address it in the markup file.

|

My markup model is, in short, a sequence of content containers, initalized with the content (local image, remote image, gallery, etc.) and used by calling a getHTML() that generates the final content. Adding a 'self_spaced' value to each element did the trick here by allowing the code that concatenates the getHTML() results to decide when a 'br' is necessary.

|

|

My thumbnail algorithm was not handling panoramas very well. Note to self: scale using shortest side rather than longest side.

|

Used shortest side for scaling, added a check for less than thumbnail size. This flowed into a bonus improvement wherein I scale the original image differently based on the thumbnail size. It really just required articulating that a thumbnail should be the most interesting x% of the photo, and be sure to adjust based on the output size.

|

|

Monochrome images weren't being processed correctly, I think this might have been an argb/rgba thing because it looked like one channel was getting dropped.

|

Fixed from recent graphics work, it just required re-generating posts.

|

The hot/top sidebar item was calculated based on current information. That is, it would read all logs up until now and list top all time visits and recent ones weighted by age. This clearly presents a problem in re-generating or updating old posts. To work around this, I had previously added the logic, "if hot/top exists in the destination html, re-use it". It's klugy.

Not only did this present some annoying corner cases in generating html, it meant that I had recent data for old posts (that pre-dated hot/top).

|

Each time the code generates a hot/top list, the log parser simply ignores content and hits that occurred after the specified date. It's kind of a 'duh' approach, but relied on previous work of enumerating all of content sitewide. It also means hot/top starts around 2014 when I started pulling server logs.

|
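
The hot/top fix in the last row boils down to filtering by date before counting. Here's a minimal Python sketch of that idea, not the site's actual code: the `(timestamp, path)` log tuples, the `known_paths` dictionary, and the linear age weighting are all assumptions made for illustration.

```python
from collections import Counter
from datetime import datetime

def hot_top(hits, known_paths, as_of, recent_days=30, n=3):
    """Return (top, hot) path lists as they would have looked on `as_of`.

    hits: iterable of (datetime, path) pairs (a hypothetical parsed log).
    known_paths: dict of path -> publish datetime for all site content.
    """
    top = Counter()  # all-time visits up to the cutoff
    hot = Counter()  # recent visits, weighted so newer hits count more
    for when, path in hits:
        published = known_paths.get(path)
        # Ignore hits after the cutoff and content that did not exist yet.
        if when > as_of or published is None or published > as_of:
            continue
        top[path] += 1
        age_days = (as_of - when).days
        if age_days < recent_days:
            # Assumed weighting: linear decay over the recent window.
            hot[path] += 1.0 - age_days / recent_days
    return ([p for p, _ in top.most_common(n)],
            [p for p, _ in hot.most_common(n)])
```

Re-generating a 2015 post then means calling `hot_top(..., as_of=<that post's date>)`, so nothing from later years can leak into its sidebar.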

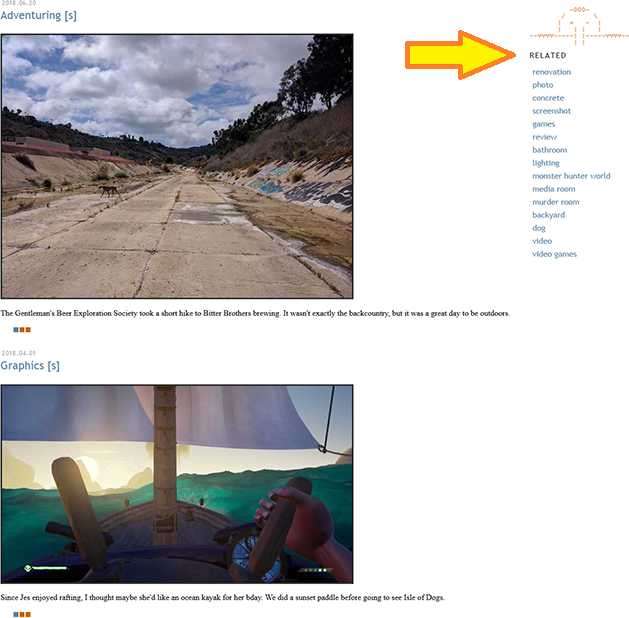
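
The 'self_spaced' idea from the table can be sketched like so. This is a rough Python illustration under assumptions: only `getHTML()` and `self_spaced` come from the description above, while the class names, the generated tags, and the exact rule for when a 'br' is needed are made up for the example.

```python
class Element:
    """A content container: built with its content, rendered via getHTML()."""
    self_spaced = False  # True if the element's own styling supplies the margin

    def __init__(self, content):
        self.content = content

    def getHTML(self):
        raise NotImplementedError

class LocalImage(Element):  # hypothetical element type
    def getHTML(self):
        return f'<img src="{self.content}">'

class Gallery(Element):  # hypothetical element type
    self_spaced = True  # assumption: gallery CSS already has a large margin

    def getHTML(self):
        thumbs = "".join(f'<img src="{src}">' for src in self.content)
        return f'<div class="gallery">{thumbs}</div>'

def render(elements):
    """Concatenate getHTML() results, adding <br> only where nothing else spaces."""
    parts = []
    for prev, cur in zip([None] + elements[:-1], elements):
        # Assumed rule: a break is needed only between two unspaced neighbors.
        if prev is not None and not (prev.self_spaced or cur.self_spaced):
            parts.append("<br>")
        parts.append(cur.getHTML())
    return "".join(parts)
```

The point of the design is that spacing decisions live in one place (the concatenation loop) rather than being sprinkled through the markup file.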

There are a lot of tags, each with their own html file (because js and php are yuck). For flow and file size constraints, I moved 'related tags' (a band-aid for irregular tagging) to the navigation bar. This required finally creating a home link - the kilroy/bpf graphic in glorious ascii form.

              -000-
            /       \
           |   + -   |
           |   | |   |
    --vvvv-----| |-----vvvv--
               | |
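
One more from the fix list above: the panorama thumbnail problem is mostly arithmetic, so here is a small Python sketch of shortest-side scaling. It only computes sizes and a crop box. The center crop is an assumption standing in for the "most interesting x%" rule, which isn't spelled out, and `thumbnail_plan` is a hypothetical name.

```python
def thumbnail_plan(width, height, thumb=128):
    """Return (scaled_size, crop_box) for a square thumbnail of side `thumb`.

    Scaling is keyed to the SHORTEST side, so a wide panorama keeps its
    full height and gets cropped instead of being shrunk into a sliver.
    """
    shortest = min(width, height)
    if shortest <= thumb:
        scale = 1.0  # already at or below thumbnail size: don't upscale
    else:
        scale = thumb / shortest
    new_w, new_h = round(width * scale), round(height * scale)
    # Center crop (assumed); box is (left, top, right, bottom).
    crop_w, crop_h = min(thumb, new_w), min(thumb, new_h)
    left, top = (new_w - crop_w) // 2, (new_h - crop_h) // 2
    return (new_w, new_h), (left, top, left + crop_w, top + crop_h)
```

For a 4000x1000 panorama and a 100px thumbnail this scales to 400x100 and crops the middle 100x100, whereas longest-side scaling would have produced a 100x25 strip.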

Coding: graphics

Web site coding flows in and out of graphics coding. I picked up where I left off, kind of.

I was thinking about histograms and color spaces and wondered how many of the 16,777,216 representable RGB colors an image actually uses. With the idea of style transfer, filters, and posterizing in mind, I thought about how I might map the colors of one image onto another.

General implementations probably exist in Adobe products, and it's the foundation for:

> Tone mapping is a technique used in image processing and computer graphics to map one set of colors to another to approximate the appearance of high-dynamic-range images in a medium that has a more limited dynamic range.

For more examples and why I hate tone mapping, visit /r/shittyHDR. On the other hand, tone mapping!

One of the main applications (other than making boring photos look different) is to stylize a photo into a different/compressed color scheme. I started simple: take a photo (top left), take a few colors (top right), and replace each pixel with the closest intensity value from the new color space. That is, I wanted the output image to have the darks still be darks and lights be lights but use whatever color is closest in the allotted space. Since the intensity for #ff0000 is the same as #00ff00, there is a secondary matching of the closest RGB delta once the intensity is matched.
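
Roughly, that two-stage match looks like the Python sketch below. It is an illustration under assumptions rather than the code behind the images: intensity is taken to be the plain channel sum (which is at least consistent with #ff0000 and #00ff00 tying), pixels are `(r, g, b)` tuples, and all function names are hypothetical.

```python
from bisect import bisect_left

def intensity(rgb):
    # Assumption: intensity is the channel sum, 0..765.
    return sum(rgb)

def rgb_delta(a, b):
    # Squared distance in RGB space, used only as the tie-breaker.
    return sum((x - y) ** 2 for x, y in zip(a, b))

def build_lookup(palette):
    """Group palette colors by intensity; return (sorted levels, buckets)."""
    buckets = {}
    for color in sorted(set(palette)):
        buckets.setdefault(intensity(color), []).append(color)
    return sorted(buckets), buckets

def map_pixel(rgb, levels, buckets):
    """Closest intensity level first, then the closest RGB within that level."""
    target = intensity(rgb)
    i = bisect_left(levels, target)
    near = levels[max(i - 1, 0):i + 1]  # the levels on either side of target
    level = min(near, key=lambda lv: abs(lv - target))
    return min(buckets[level], key=lambda c: rgb_delta(c, rgb))

def map_image(pixels, palette):
    """Map every pixel of one image onto the colors of a (non-empty) palette."""
    levels, buckets = build_lookup(palette)
    return [map_pixel(p, levels, buckets) for p in pixels]
```

With a palette of black, pure red, pure green, and white, a reddish pixel and a greenish pixel of the same intensity land on red and green respectively, which is the job the secondary RGB match is there to do.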

I quickly found that jpg compression creates a wide range of in-between colors, even from a very contrasty source. E.g.

JPG: 438 intensities for 764928 values.

BMP: 51 intensities for 764928 values.

Even the bitmap flavor had what was probably antialiasing. Good? Bad? Well, it can be manipulated.
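
For reference, tallies like the ones above can be produced with a few lines of Python. This sketch assumes the pixels have already been decoded into `(r, g, b)` tuples, that "values" means the pixel count, and that intensity is the channel sum; `color_stats` is a hypothetical helper.

```python
def color_stats(pixels):
    """Count pixels, distinct RGB colors, and distinct intensities."""
    colors = set(pixels)
    # Assumed intensity: channel sum, so at most 766 distinct levels.
    intensities = {sum(rgb) for rgb in colors}
    return {
        "pixels": len(pixels),
        "colors": len(colors),
        "intensities": len(intensities),
    }
```

Running it on the same picture saved as jpg and as bmp is a quick way to see how many in-between shades the compression introduces.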

Here's a crazy one. Using the RGB values from Super Mario Bros in jpg form, a Horizon screen can be almost exactly reproduced. Honestly I'm 50% sure that I miscoded something, though I should say the algo used the reddish ground that was chopped from the screenshot. But if I take that bafflingly large SMB color space and remove all values that aren't highly represented, mapping Aloy to Mario produces the reduced color space you'd expect. Same code so... I guess it wasn't buggy.
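
The pruning step can be sketched as a frequency cutoff. The `min_share` threshold here is an assumption for illustration; the cutoff actually used for the images isn't stated.

```python
from collections import Counter

def prune_palette(pixels, min_share=0.01):
    """Keep only the colors that cover at least `min_share` of the pixels."""
    counts = Counter(pixels)
    cutoff = min_share * len(pixels)
    # most_common() orders the survivors from most to least frequent.
    return [color for color, n in counts.most_common() if n >= cutoff]
```

Feeding the pruned list in as the target palette is what turns a jpg's thousands of near-duplicate shades back into the handful of colors the source art was drawn with.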

Okay, what about less artsy tone maps?

I hit go on a few photos, mapping a->b and b->a. Toning changes can be ever so slight since the color palettes are large.

Taking a step back, my approach was to match an input color to one that has an identical intensity, choosing the closest actual value if possible. This could result in a lot of identical input/output, and some oddballs where you're forced to a very different color because that exact intensity isn't represented. The algorithm could be softened up.

But what about shifting gears: instead of primarily matching intensity, primarily match hue. You could have lights replacing darks, but the colors will be as close to the original as possible.
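
A hue-first match might look like the following Python sketch, using the standard library's `colorsys` for the conversion. The secondary RGB tie-breaker mirrors the intensity version and is an assumption, as are the function names. One caveat worth knowing: grays have no real hue, and `colorsys` reports them as hue 0, so they will drift toward reds unless handled separately.

```python
import colorsys

def hue_of(rgb):
    """Hue in [0, 1) from an (r, g, b) tuple of 0..255 ints."""
    r, g, b = (c / 255 for c in rgb)
    return colorsys.rgb_to_hsv(r, g, b)[0]

def hue_distance(h1, h2):
    d = abs(h1 - h2)
    return min(d, 1.0 - d)  # hue is circular, so 0.95 and 0.05 are close

def rgb_delta(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def map_pixel_by_hue(rgb, palette):
    """Closest hue first; closest RGB only breaks exact hue ties."""
    h = hue_of(rgb)
    return min(
        palette,
        key=lambda c: (hue_distance(h, hue_of(c)), rgb_delta(c, rgb)),
    )
```

Note that brightness plays no part in the primary match, so a dark blue pixel can come back as a bright blue one, which is the "lights replacing darks" effect.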

Again, jpg minimizes any transformative effect until we start pruning the outliers. When you do, you see a bush pop up in Vale's knee pucks, Laguna Seca runoff matching [?] boxes, and Red Bull being recreated with the sky.

The experiment was fun, even if it's a bit of a rabbit hole.

> The president has been kidnapped by ninjas. Are you a bad enough dude to rescue the president?
>
> From Bad Dudes Vs. DragonNinja (pic unrelated)
